AI-Generated Code: How Copilot and Claude Change Dev

In just two years, AI coding assistants have moved from novelty to necessity. GitHub Copilot crossed a million paying users, and Claude Code gained ground quickly on the strength of its reasoning. These systems are more than autocomplete: they infer intent, reason across files, and draft functions, tests, and docs. The result is a fundamental shift: less time spent typing syntax, more time directing, validating, and integrating.

Why this shift matters

AI-generated code changes the unit of work. Instead of line-by-line assistance, developers can request capabilities and receive coherent drafts—functions, tests, migrations, even release notes.

Key implications:

- Throughput and focus: Early studies report productivity gains of 30–55%, though results vary by task and team. More important, engineers spend their time on architecture, constraints, and acceptance criteria rather than on recall and boilerplate.

- Code as conversation: The development loop becomes iterative prompts, critiques, and refinements—closer to pair programming than single-author coding.

- Quality leverage: With tests and docs generated alongside code, teams can maintain higher standards if they enforce review and verification.

How AI assistants work differently

Traditional autocomplete predicts the next token from local context. Modern assistants:

- Model intent: They interpret goals from natural language, issues, and comments, not just code around the cursor.

- Reason over scope: With larger context windows and repository-aware tools, they trace dependencies and propose consistent changes across files.

- Produce artifacts: They can emit code, tests, docs, and even commit messages in a single run.

- Sustain dialogue: The best results come from iterative, critique-driven chat, not one-shot prompts.

Limitations to respect:

- Hallucinations: Confident but incorrect APIs or patterns.

- Partial context: Without proper grounding (e.g., your codebase docs), assistants generalize from public patterns.

- Non-determinism: Slightly different drafts across runs; lock quality with tests and reviews.
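One cheap guard against hallucinated APIs is to check that every library symbol a draft references actually resolves before a human spends review time on it. The sketch below is a minimal illustration, assuming the references have already been extracted from the draft as "module.attr" strings; the function name `missing_symbols` is hypothetical, and the check only proves a name exists, not that it is used correctly.

```python
import importlib


def missing_symbols(references):
    """Return the dotted names from `references` that cannot be resolved.

    Each reference is a "module.attr" string taken from an AI draft.
    """
    missing = []
    for ref in references:
        module_name, _, attr = ref.rpartition(".")
        try:
            module = importlib.import_module(module_name)
        except (ImportError, ValueError):
            # Unknown module, or a reference with no module part at all.
            missing.append(ref)
            continue
        if not hasattr(module, attr):
            missing.append(ref)
    return missing


# `json.loads` exists; `json.parse` looks plausible but is not a real function.
print(missing_symbols(["json.loads", "json.parse", "os.path.join"]))
```

Tests and review still do the real work; a check like this only filters out the most obvious inventions early.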

Measuring productivity beyond speed

Raw speed matters, but durable gains come from improved flow and quality. Track:

- Lead time for changes: Idea → merged PR.

- Review throughput: PRs per reviewer per week; average review iterations.

- Suggestion acceptance rate: Percentage of AI proposals merged with minimal edits.

- Defect escape rate: Bugs found post-merge; severity distribution.

- Coverage and test debt: Change in unit/integration test coverage.
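Several of these metrics can be computed from data most teams already have in their Git host and issue tracker. The following is a rough sketch, not a reporting tool: the `PullRequest` record and its field names are hypothetical, and "opened" is used as an assumed proxy for the moment an idea becomes work.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PullRequest:
    opened_at: datetime          # assumed proxy for "idea"
    merged_at: datetime
    ai_suggestions_offered: int
    ai_suggestions_kept: int     # merged with minimal edits
    post_merge_bugs: int


def median_lead_time_hours(prs):
    """Median time from opening a PR to merging it, in hours."""
    return median((pr.merged_at - pr.opened_at).total_seconds() / 3600 for pr in prs)


def acceptance_rate(prs):
    """Share of offered AI suggestions that survived to merge."""
    offered = sum(pr.ai_suggestions_offered for pr in prs)
    kept = sum(pr.ai_suggestions_kept for pr in prs)
    return kept / offered if offered else 0.0


def defect_escape_rate(prs):
    """Share of merged PRs with at least one bug found after merge."""
    if not prs:
        return 0.0
    return sum(1 for pr in prs if pr.post_merge_bugs > 0) / len(prs)


sample_prs = [
    PullRequest(datetime(2024, 1, 1, 9), datetime(2024, 1, 2, 9), 10, 6, 0),
    PullRequest(datetime(2024, 1, 3, 9), datetime(2024, 1, 3, 21), 5, 3, 1),
    PullRequest(datetime(2024, 1, 4, 9), datetime(2024, 1, 6, 9), 5, 1, 0),
]

print(median_lead_time_hours(sample_prs))  # 24.0
print(acceptance_rate(sample_prs))         # 0.5
print(defect_escape_rate(sample_prs))
```

The median is used for lead time because a single long-running PR would otherwise dominate a mean.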

Practical measurement plan:

- Baseline: Capture 4–6 weeks of pre-adoption metrics per team.

- Controlled rollout: A/B by squad or feature area; rotate tools to isolate effects.

- Guardrail KPIs: Pause or narrow the rollout if defect counts or incident minutes trend upward against the baseline.

- Qualitative logs: Weekly retros on friction points, prompt patterns, and code review pain.
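The guardrail step in this plan can be made mechanical by comparing pilot numbers against the baseline. This is a minimal sketch under stated assumptions: the KPI names are invented for illustration, every KPI is one where higher is worse, and the 10% tolerance is an arbitrary placeholder that each team would set for itself.

```python
def guardrail_breached(baseline, pilot, tolerance=0.10):
    """Return the KPIs where the pilot exceeds baseline by more than `tolerance`.

    Both arguments map KPI name -> value, where higher is worse
    (e.g. defects per 100 PRs, incident minutes per week).
    """
    breached = []
    for name, base_value in baseline.items():
        limit = base_value * (1 + tolerance)
        if pilot.get(name, 0.0) > limit:
            breached.append(name)
    return breached


# Hypothetical numbers: defects are within tolerance, incident minutes are not.
baseline = {"defects_per_100_prs": 4.0, "incident_minutes_per_week": 30.0}
pilot = {"defects_per_100_prs": 4.2, "incident_minutes_per_week": 45.0}

print(guardrail_breached(baseline, pilot))
```

A non-empty result is a signal to pause and investigate, not proof that the assistant caused the regression; the A/B split is what helps separate those.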

New skills and roles

Companies increasingly value problem-solving and systems thinking over rote syntax.

Shifts for engineers:

- From recall to constraints: Define interfaces, edge cases, and performance envelopes.

- From typing to reviewing: Critique drafts for invariants, readability, and security.

- From solo to orchestration: Stitch AI outputs into cohesive designs and pipelines.

Emerging roles:

- AI Engineer: Owns prompt patterns, tool integration, evaluation harnesses, and safety rails.

- Code Quality Lead: Curates review checklists tailored to AI artifacts and enforces test policies.

- Developer Productivity (DevProd): Tunes IDE integrations, telemetry, and model settings.

Safe and reliable AI coding

Adopt assistants with explicit controls, not blind trust.

Risk areas and mitigations:

- Security: Require secret scanning, SAST/DAST, and dependency checks on all AI-generated code.

- Licensing: Enable license scanners; block disallowed licenses and ensure provenance in SBOMs.

- Privacy: Restrict external context sharing; use on-prem or privacy-preserving configurations.

- Consistency: Enforce style and design rules via linters and automated architecture checks.
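To give a feel for what a secret-scanning gate does, here is a small sketch that inspects only the added lines of a unified diff. The two patterns are illustrative assumptions, not a complete rule set, and the function name `scan_added_lines` is hypothetical; a dedicated scanner covers far more formats and should be preferred in CI.

```python
import re

# Illustrative patterns only; dedicated scanners cover many more formats.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_credential": re.compile(
        r"""(api[_-]?key|secret|password|token)\s*[:=]\s*['"][^'"]{8,}['"]""",
        re.IGNORECASE,
    ),
}


def scan_added_lines(diff_text):
    """Return (line_number_in_diff, pattern_name) for each added line that matches."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect added lines; skip the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


# Made-up diff with placeholder values, not real credentials.
sample_diff = """\
+++ b/config.py
+API_KEY = "example-value-123"
+timeout = 30
-old_password = "removed-line-is-ignored"
+aws_id = "AKIAABCDEFGHIJKLMNOP"
"""

for finding in scan_added_lines(sample_diff):
    print(finding)
```

Running the same gate on every diff, whoever or whatever wrote it, keeps the policy simple and avoids having to label which changes were AI-generated.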

Review checklist for AI-generated diffs:

- Correctness: Edge cases, error handling, and data invariants.

- Test completeness: Positive/negative cases, property tests, performance baselines.

- Observability: Logs, metrics, and alerts for critical paths.

- Docs: Inline comments and user-facing docs updated.

Workflows that work

The biggest wins come from structured prompts and evaluation.

- Test-first prompting

  - Specify acceptance criteria and edge cases.

  - Ask the assistant to draft tests first, then code until tests pass.

  - Iterate on failures; capture flaky patterns in a prompt library.

- Repo-aware refactor

  - Start with a short design note: goal, constraints, files affected.

  - Ask for a step plan before code changes.

  - Apply changes in small PRs; let the assistant update imports and docs.

- Spec-to-code from ADRs

  - Feed architecture decision records or RFCs as context.

  - Request interface stubs, adapters, and integration tests aligned to the ADR.

  - Have the assistant generate a migration plan and rollout checklist.

- Incident-to-fix loop

  - Paste sanitized logs and failing traces.

  - Ask for hypotheses, then minimal repro tests, then a contained fix.

  - Generate a post-incident doc with learnings and prevention steps.
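As an illustration of the test-first workflow above, the acceptance criteria are written as tests before any implementation is requested, and a draft is accepted only once they pass. The `slugify` function and its criteria are hypothetical, chosen only because they are small enough to read at a glance.

```python
import re


# Step 1: acceptance criteria, written as tests before any implementation exists.
def test_lowercases_and_hyphenates():
    assert slugify("Hello World") == "hello-world"


def test_collapses_punctuation_runs():
    assert slugify("AI -- Generated, Code!") == "ai-generated-code"


def test_empty_input_gives_empty_slug():
    assert slugify("") == ""


# Step 2: the draft requested from the assistant, kept only if the tests pass.
def slugify(title):
    """Lowercase the title and join its alphanumeric runs with hyphens."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


# A test runner such as pytest would collect these functions automatically;
# calling them directly keeps the sketch dependency-free.
for test in (
    test_lowercases_and_hyphenates,
    test_collapses_punctuation_runs,
    test_empty_input_gives_empty_slug,
):
    test()
print("all acceptance tests passed")
```

The tests double as the prompt: pasting them into the conversation gives the assistant a precise target and gives the reviewer a precise definition of done.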

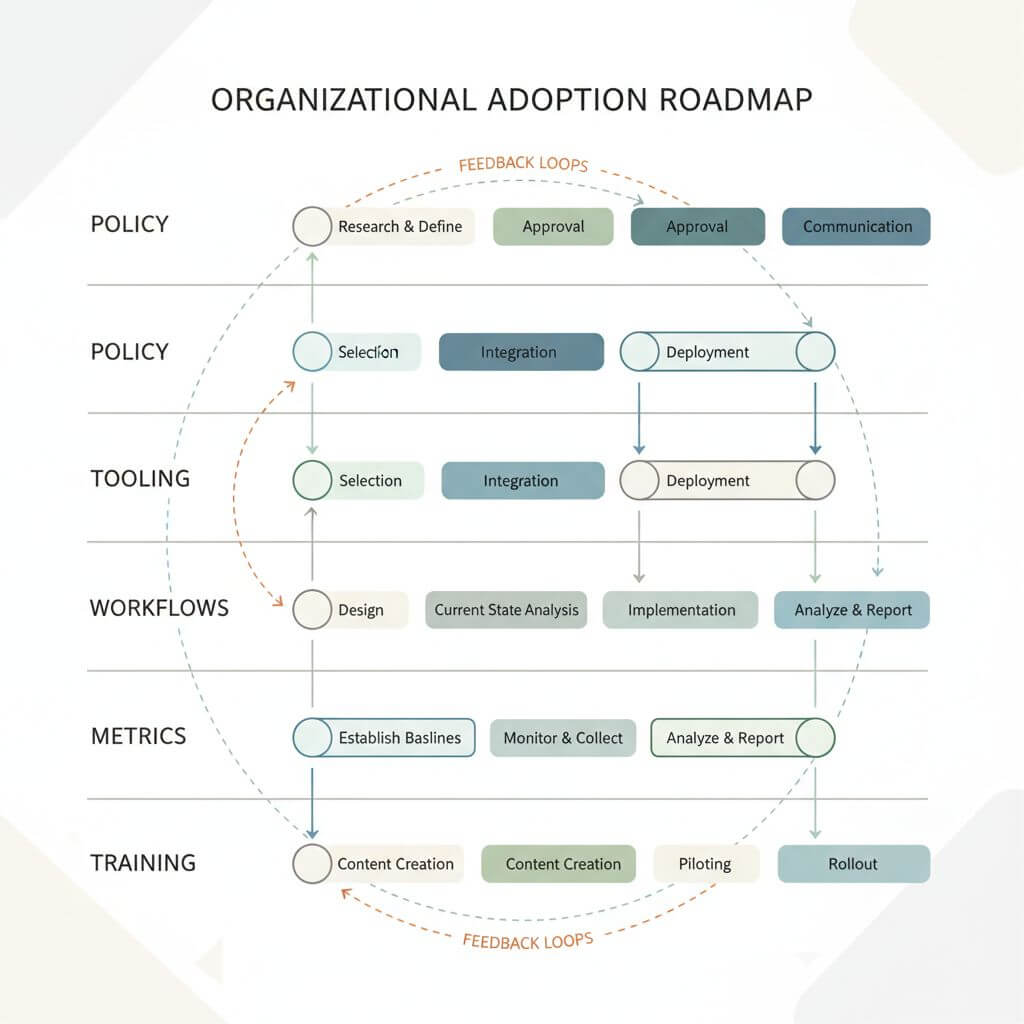

Organizational adoption roadmap

- Policy and tooling

  - Define approved assistants and privacy settings.

  - Standardize linters, scanners, and CI gates for AI-generated diffs.

- Playbooks and libraries

  - Publish prompt patterns per stack (backend, frontend, data, mobile).

  - Maintain reusable spec templates, test checklists, and PR descriptions.

- Training and onboarding

  - Pair sessions on critique-driven prompting and safe review.

  - Shadow reviews to calibrate quality bars across teams.

- Metrics and review cadence

  - Weekly dashboards of speed and quality KPIs.

  - Monthly audits of defects, security findings, and coverage.

- Hiring and career paths

  - Evaluate for system design, debugging, and data literacy over syntax trivia.

  - Recognize AI engineering contributions in promotion criteria.

Future outlook

AI will not replace developers; it will elevate them. Expect:

- More repo-native reasoning: Full-project refactors and reliable multi-file changes.

- Richer IDE agents: Intent-aware background tasks, guided migrations, and automatic test generation.

- Natural-language PRs: Specs, diffs, and rationale co-authored with the assistant.

- Continuous maintenance: Agents that keep dependencies fresh and configs secure under human oversight.

The developers who thrive will direct, validate, and iterate—treating AI as a capable pair, not an oracle.

FAQ

- Will AI replace developers?

  - No. It shifts focus to design, constraints, and validation. Teams still own correctness, safety, and product sense.

- How do we start safely?

  - Pilot with non-critical services, enforce CI gates, and use a test-first workflow. Measure and expand.

- What languages benefit most?

  - Popular stacks (JavaScript/TypeScript, Python, Java, Go) see the fastest gains due to rich training data and tooling.

- How do we prevent leaked secrets?

  - Mask sensitive logs, disable external context sharing when needed, and use enterprise offerings with privacy controls.

- How do we evaluate tools?

  - Run head-to-head tasks with your codebase: correctness rate, edit distance to final, test pass rate, and developer satisfaction.

- How do we avoid over-reliance?

  - Keep humans in the loop: mandatory reviews, test-driven prompts, and incident drills that practice manual debugging.
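For the tool-evaluation question above, "edit distance to final" can be approximated with Python's standard `difflib` by comparing the assistant's draft with the code that was eventually merged. This is a sketch under assumptions: it uses a line-level similarity ratio as a stand-in for a true edit distance, and the `score_tool` helper and its input shape are hypothetical.

```python
import difflib


def edit_similarity(ai_draft, final_code):
    """Return a 0..1 line-level similarity between the AI draft and the merged code."""
    matcher = difflib.SequenceMatcher(None, ai_draft.splitlines(), final_code.splitlines())
    return matcher.ratio()


def score_tool(runs):
    """Aggregate per-task runs; each run is (ai_draft, final_code, tests_passed)."""
    n = len(runs)
    return {
        "mean_similarity": sum(edit_similarity(d, f) for d, f, _ in runs) / n,
        "test_pass_rate": sum(1 for _, _, passed in runs if passed) / n,
    }


draft = "def add(a, b):\n    return a+b\n"
final = "def add(a, b):\n    return a + b\n"

# One run needed a one-line edit and passed; one was merged unchanged but failed tests.
runs = [(draft, final, True), (final, final, False)]

print(edit_similarity(draft, final))  # 0.5: one of two lines unchanged
print(score_tool(runs))
```

A higher similarity means less human rework, but it says nothing about correctness on its own, which is why it is reported next to the test pass rate rather than instead of it.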

Conclusion

Copilot, Claude, and their peers are reshaping software development—from typing to directing, from recall to reasoning. The winning teams will build safety rails, measure outcomes, and cultivate new skills around critique and orchestration. The future isn’t AI vs. developers—it’s developers amplified by AI.
